Agentic AI at work

Where enterprise value is real - and where autonomy becomes risk

An agentic AI system does more than generate text. It can plan, decide, and act across multiple steps using tools, data sources, workflows, and sometimes other agents. In enterprise terms, this means the system can move from “answering a question” to executing work.

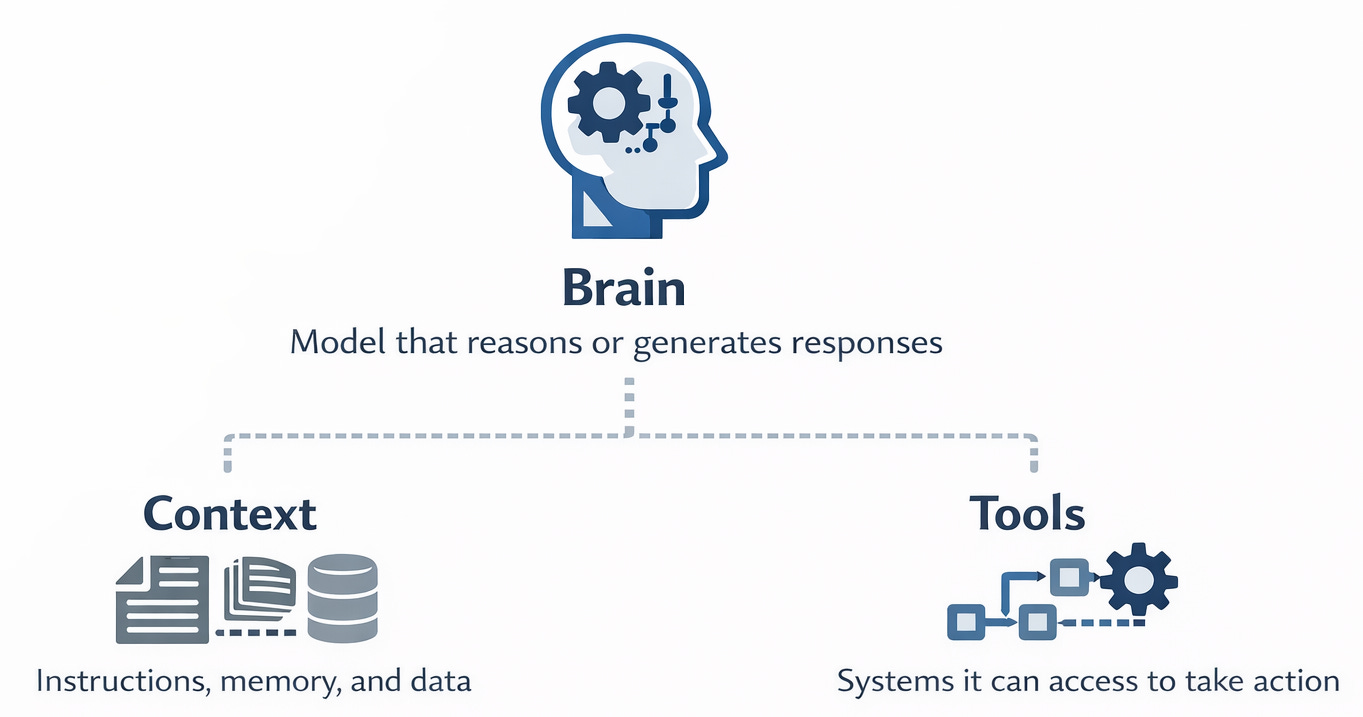

A simple way to think about agentic AI is:

Brain: The model that reasons or generates responses.

Context: The instructions, memory, and data it uses.

Tools: The systems it can access to take action.

That distinction matters because the main risk is no longer just inaccurate output, which we have seen over the last couple of years as a result of non-deterministic system behavior.

The bigger issue is unsafe or uncontrolled action.

Where agentic AI makes sense

While the industry presents it as the next big thing in operational efficiency, agentic AI is most useful when work is:

multi-step, not one-shot

repetitive but not highly judgment-sensitive

tool-enabled, but within clear limits

bounded in scope

easy to supervise, interrupt, or reverse

Agentic AI use cases in an enterprise

These are typically the most effective starting points: Summarizing emails, documents, tickets, or meetings; researching approved internal knowledge sources; drafting reports, RFP responses, or internal communications; gathering information across systems for analysts; triaging support requests before human review; and coordinating routine workflow steps across approved tools.

These use cases tend to perform well because the impact of failure remains limited, and actions can usually be reviewed, validated, or reversed if necessary.

Where agentic AI is higher risk

Risk rises sharply when an agent can take actions that are privileged, external, irreversible, or difficult to detect quickly. High-risk situations include moving money or issuing refunds, approving transactions or business decisions, modifying authoritative records in ERP, HR, finance, or CRM systems, sending messages as a trusted employee or executive, changing access rights or interacting with IAM or PAM systems, executing code, scripts, database commands, or infrastructure changes, browsing untrusted websites or using unvetted third-party tools, and making decisions that affect customers, employees, or regulated outcomes.

In these cases, the issue extends beyond model quality. The real risk comes from the combination of autonomy, tool access, and business impact. To identify high-risk areas, I recommend consulting Annex III of the EU AI Act, which provides an extensive list of what is considered a high-risk AI system.

Another rule of thumb for daily operations is that an AI agent becomes materially more dangerous when it is connected to: Payment or treasury systems; ERP, HR, CRM, or ticketing systems with write access; email or collaboration tools with send/delete capability; admin consoles or privileged APIs; shells, Python, PowerShell, CI/CD pipelines, or database tools; browsers operating in authenticated sessions; and external plugins, connectors, MCP servers, or other agent-to-agent interfaces.

A read-only assistant is very different from an agent that can send, change, approve, execute, or delete.

To sum it up, the most practical rule of thumb is the following:

Use ‘classic’ GenAI (or even Machine Learning) when:

the task is one-shot

no tools are needed

the output is advisory only

a human will perform the actual action

Use a bounded agent when:

the task requires several steps

only a small, approved toolset is needed

the work is narrow and reversible

there is clear ownership and monitoring

Use a tightly governed agent with human approval when:

money, legal exposure, customer outcomes, regulated data, or critical operations are involved

rollback is difficult

misuse could create material business harm
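
The rule of thumb above can be sketched as a small decision function. This is only an illustration: the `Task` fields and the `recommend_approach` name are hypothetical, and the ordering of the checks is one possible reading of the three lists, not a definitive policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical task profile; the field names are assumptions."""
    multi_step: bool         # needs several steps rather than one shot
    needs_tools: bool        # must call tools to take action
    high_impact: bool        # money, legal exposure, regulated data, critical operations
    hard_to_roll_back: bool  # rollback is difficult


def recommend_approach(task: Task) -> str:
    """Map a task profile to one of the three options listed above."""
    # One-shot, tool-free work stays advisory: a human performs the action.
    if not task.multi_step and not task.needs_tools:
        return "classic GenAI"
    # Anything that can cause material harm or cannot be undone needs approval.
    if task.high_impact or task.hard_to_roll_back:
        return "tightly governed agent with human approval"
    return "bounded agent"


print(recommend_approach(Task(True, True, False, False)))
```

In practice the inputs would come from a risk assessment rather than four booleans, but the order of the checks reflects the same idea: first ask whether an agent is needed at all, then ask how much damage it could do.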

So, can these guardrails be narrowed down into basic principles?

Yes, of course.

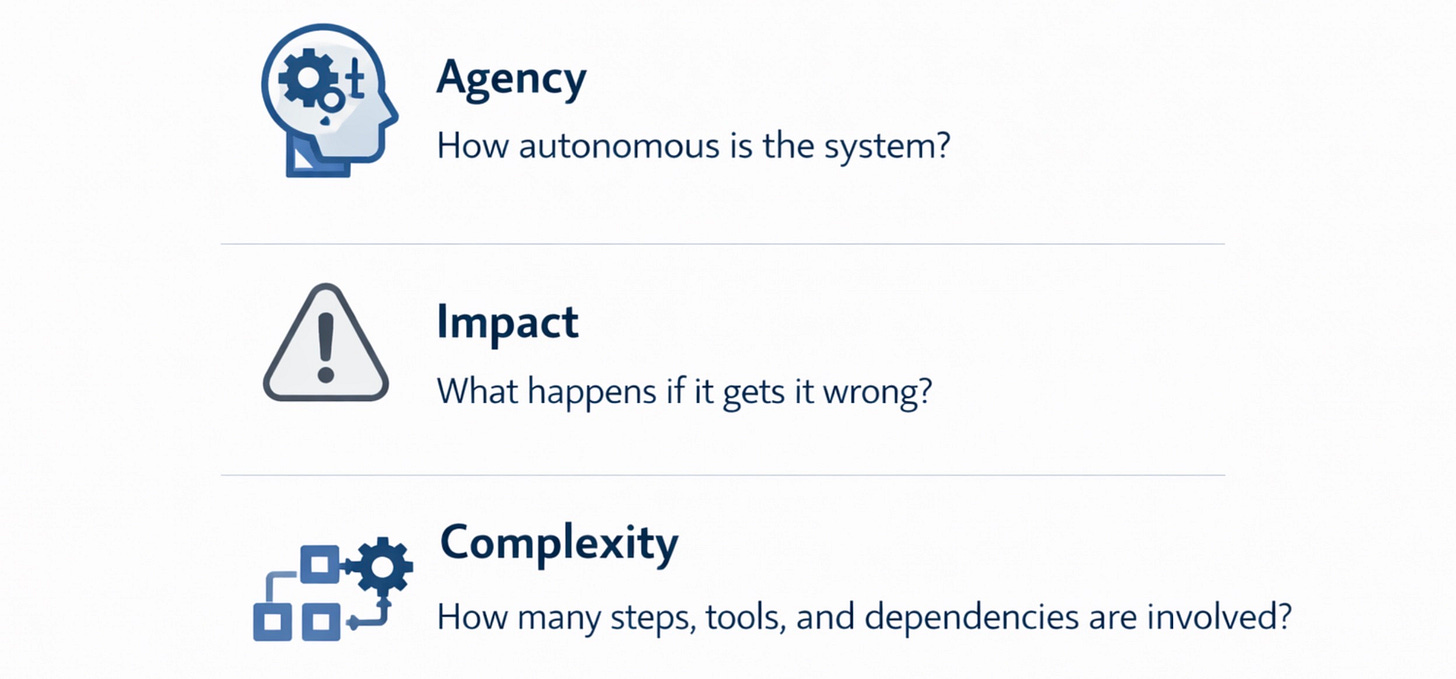

Agentic AI usually makes sense when three factors stay controlled:

Agency: How autonomous is the system?

Impact: What happens if it gets it wrong?

Complexity: How many steps, tools, and dependencies are involved?

The higher these go, the stronger the controls and guardrails must be.

How to implement Agentic AI safely in an enterprise

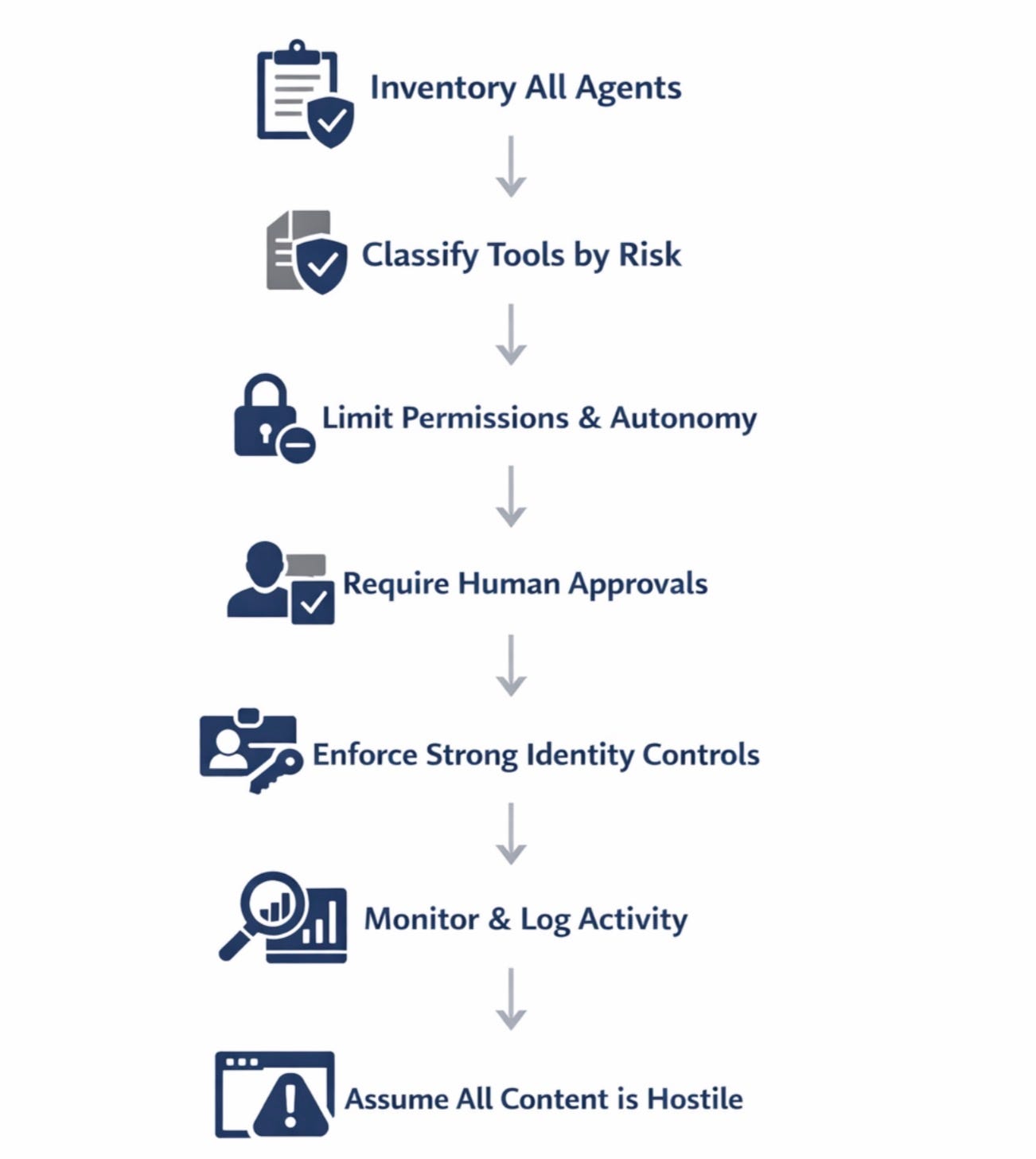

Begin by creating a clear inventory of all agents, documenting each agent’s purpose, ownership, underlying model, data access, available tools, and assigned risk rating. From there, classify the tools those agents can use according to their level of risk, distinguishing between read-only capabilities and those that can write, approve, or execute actions. Apply the principles of least privilege and least agency by limiting each agent’s permissions and autonomy to only what is strictly necessary for its role. Introduce human approval at critical control points, ensuring that any high-impact action is reviewed before execution.
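
As a minimal sketch of these steps, an inventory entry and a least-privilege check might look like the following. All names (`AgentRecord`, `ToolRisk`, `authorize`, the example tools) are hypothetical, and the choice to require human approval for every tool above read-only is an assumption that each organization would tune.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class ToolRisk(IntEnum):
    """Illustrative tool risk classes, ordered from low to high."""
    READ_ONLY = 1  # can only retrieve information
    WRITE = 2      # can change records or send messages
    EXECUTE = 3    # can approve, run code, or move money


@dataclass
class AgentRecord:
    """One inventory entry; fields follow the attributes named above."""
    name: str
    purpose: str
    owner: str
    model: str
    risk_rating: str
    allowed_tools: dict[str, ToolRisk] = field(default_factory=dict)


def authorize(agent: AgentRecord, tool: str, human_approved: bool = False,
              approval_from: ToolRisk = ToolRisk.WRITE) -> bool:
    """Deny tools outside the inventory; gate risky ones on human approval."""
    risk = agent.allowed_tools.get(tool)
    if risk is None:
        return False           # least privilege: not listed means not allowed
    if risk >= approval_from:
        return human_approved  # critical control point
    return True


agent = AgentRecord(
    name="triage-bot", purpose="triage support tickets", owner="support-ops",
    model="example-model", risk_rating="low",
    allowed_tools={"search_kb": ToolRisk.READ_ONLY, "update_ticket": ToolRisk.WRITE},
)
print(authorize(agent, "search_kb"), authorize(agent, "update_ticket"))
```

The useful property is the default: a tool that was never added to the inventory is refused, even if someone claims approval for it.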

Strengthen identity management by treating agents as non-human identities with unique credentials that can be quickly revoked if needed. Maintain comprehensive monitoring and logging of all agent activity, including tool usage, goal changes, privilege escalation, and any unusual behavior. Finally, operate with the assumption that all connected content, whether from web pages, documents, emails, APIs, or even other agents, may be malicious and design safeguards accordingly.
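
To make the identity and logging points concrete, here is a small sketch using only the Python standard library. The `AgentIdentity` class and `call_tool` function are hypothetical; a real deployment would rely on the organization's identity provider and log pipeline rather than in-memory objects.

```python
import logging
import secrets
from dataclasses import dataclass, field

audit_log = logging.getLogger("agent.audit")


@dataclass
class AgentIdentity:
    """A non-human identity with its own credential (illustrative only)."""
    agent_name: str
    token: str = field(default_factory=lambda: secrets.token_urlsafe(32))
    revoked: bool = False

    def revoke(self) -> None:
        """Invalidate the credential and record that it happened."""
        self.revoked = True
        audit_log.warning("credential revoked for %s", self.agent_name)


def call_tool(identity: AgentIdentity, tool: str, arguments: dict) -> bool:
    """Log every attempt and refuse it if the credential was revoked."""
    if identity.revoked:
        audit_log.warning("blocked: %s tried %s with a revoked credential",
                          identity.agent_name, tool)
        return False
    # Log argument names only, so sensitive values stay out of the log.
    audit_log.info("%s called %s with argument keys %s",
                   identity.agent_name, tool, sorted(arguments))
    return True


bot = AgentIdentity("report-drafter")
first_call = call_tool(bot, "search_kb", {"query": "Q3 revenue"})
bot.revoke()
second_call = call_tool(bot, "search_kb", {"query": "Q3 revenue"})
```

Because each agent holds its own credential, revoking one agent does not interrupt the others, and every log entry can be traced to a single identity.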

The bottom line

Agentic AI works best when it acts like a well-supervised junior operator: Focused mission, approved tools, visible activity, and easy human intervention.

It becomes risky when it acts like an unsupervised privileged employee: Broad permissions, external connectivity, code execution, money movement, or decisions affecting people.

Use agents for coordination, preparation, and controlled execution. Use humans for approval, exceptions, and irreversible actions - and your chances of applying Agentic AI successfully will rise significantly.

The "easy to supervise, interrupt, or reverse" criterion is the one that most enterprise teams skip in their rush to ship. You end up with agents that work great in demos but have no graceful failure mode when they hit an unexpected state.

What I have found useful is defining action tiers before building: what the agent can do alone, what needs a human checkpoint, and what it should never attempt without explicit approval. Basically, treat it like onboarding a contractor: write the rules of engagement first, not after the first incident.
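
One way to write such tiers down before building is a plain lookup table. The sketch below is an assumption about how this could look; the action names and the `tier_for` helper are made up for illustration.

```python
from enum import Enum


class Tier(Enum):
    AUTONOMOUS = "agent may act alone"
    CHECKPOINT = "needs a human checkpoint"
    APPROVAL_ONLY = "never without explicit approval"


# Hypothetical rules of engagement, agreed before the agent is built.
ACTION_TIERS = {
    "summarize_ticket": Tier.AUTONOMOUS,
    "draft_reply": Tier.AUTONOMOUS,
    "update_crm_record": Tier.CHECKPOINT,
    "issue_refund": Tier.APPROVAL_ONLY,
}


def tier_for(action: str) -> Tier:
    """Return the tier for an action; unlisted actions get the strictest tier."""
    return ACTION_TIERS.get(action, Tier.APPROVAL_ONLY)


print(tier_for("draft_reply").value)
print(tier_for("delete_database").value)
```

Defaulting unknown actions to the strictest tier gives the agent a graceful failure mode: when it hits an unexpected state, it stops and asks instead of improvising.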